Why purpose-built analytics tools beat Optimizely / VWO's A/B test tracking

We typically find that relying just on Optimizely, VWO or Convert.com's A/B test tracking has hidden costs:

- Restrictive analytics capabilities

- Worse site performance

- Increased compliance obligations & compromised data sovereignty

In our experience, Analytics tools like GA and Snowplow are more trustworthy and full-featured. At Mint Metrics, all client experiments get tracked into both GA & Snowplow. We no longer use or trust SaaS testing tools' built-in trackers.

Here's how purpose-built analytics tools lift your split testing game...

Better analytics capabilities & data quality

SaaS testing tools make excellent reporting interfaces and WYSIWYG editors. Unfortunately, analytics isn't their core competence.

1. Perform more sophisticated analytics

It's likely you're already tracking everything into Adobe, GA or Snowplow. Any data that's not in your analytics tool can easily be joined on via a customer ID or transaction ID. This opens your experiments up to measuring a wider range of outcomes, such as:

- Customer loyalty / value

- Fraud reduction

- Enhanced ecommerce behaviour

- Any metrics with sensitive customer data
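To make the join concrete, here is a minimal sketch of attaching back-office order data to experiment exposures via a customer ID. The records, field names (`customer_id`, `variant`, `revenue`, `refunded`) and the `net_revenue_per_customer` helper are all hypothetical. In practice, the exposures would come out of your analytics tool and the orders from wherever your business keeps them.

```python
from collections import defaultdict

# Hypothetical exposure records, as they might be exported from an analytics tool.
exposures = [
    {"customer_id": "c1", "variant": "control"},
    {"customer_id": "c2", "variant": "variation"},
    {"customer_id": "c3", "variant": "variation"},
]

# Hypothetical back-office orders that never pass through the browser.
orders = [
    {"customer_id": "c1", "revenue": 40.0, "refunded": False},
    {"customer_id": "c2", "revenue": 55.0, "refunded": False},
    {"customer_id": "c2", "revenue": 20.0, "refunded": True},
    {"customer_id": "c3", "revenue": 30.0, "refunded": False},
]


def net_revenue_per_customer(exposures, orders):
    """Join orders onto exposures by customer ID and average per variant."""
    variant_by_customer = {e["customer_id"]: e["variant"] for e in exposures}

    # Count every exposed customer, including those who never ordered.
    customers = defaultdict(set)
    for e in exposures:
        customers[e["variant"]].add(e["customer_id"])

    revenue = defaultdict(float)
    for o in orders:
        variant = variant_by_customer.get(o["customer_id"])
        # Skip orders from customers outside the test, and refunded orders.
        if variant is None or o["refunded"]:
            continue
        revenue[variant] += o["revenue"]

    return {v: revenue[v] / len(ids) for v, ids in customers.items()}


result = net_revenue_per_customer(exposures, orders)
print(result)
```

The same pattern works with a transaction ID as the join key. The point is that refunds, fraud flags or lifetime value can sit in any system, as long as the key is shared.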

2. More robust & better-audited analytics solutions

Which tracking tool do you understand better? GA's or Optimizely's?

All our clients audit their Analytics tools and have many eyes reviewing the data in GA or Snowplow. Yet no one we know has had their split testing tracker audited. More often than not, SaaS testing trackers fail in ways you don't expect.

Bot traffic - the most common issue

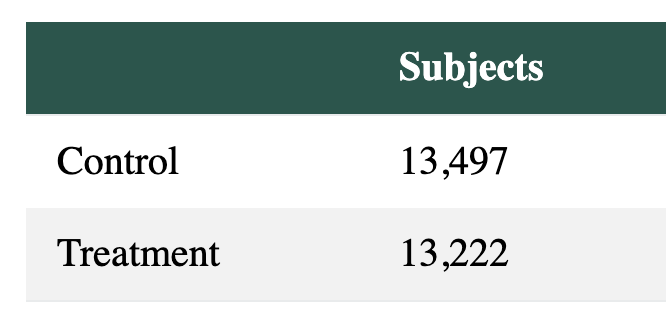

We often see SaaS trackers counting bot traffic. In one case, a SaaS tool reported a statistically significant 70% decrease in checkouts for a finance client:

A test we ran on Convert.com once tracked 150% more users than tools like GA and Snowplow...

Above, Snowplow data. With bots filtered out, the entire outcome of the test shifted drastically.

After we excluded bots, our purpose-built Analytics tools reported that checkouts were neutral. What a scare that was!
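As a rough illustration of why bot filtering can flip a result, the sketch below counts checkouts per variant with and without a simple user-agent filter. The events, the `BOT_PATTERN` regex and the helper names are made up for this example. Real bot detection usually relies on maintained bot lists and behavioural signals rather than a four-word regex, and this is not the data from the client test above.

```python
import re
from collections import Counter

# Deliberately naive pattern; an assumption for illustration only.
BOT_PATTERN = re.compile(r"bot|crawler|spider|headless", re.IGNORECASE)

HUMAN_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
HEADLESS_UA = "Mozilla/5.0 (X11; Linux x86_64) HeadlessChrome/119.0 Safari/537.36"

# Hypothetical checkout events carrying the variant and the user agent.
events = [
    {"variant": "control", "event": "checkout", "useragent": HUMAN_UA},
    {"variant": "control", "event": "checkout", "useragent": HUMAN_UA},
    {"variant": "control", "event": "checkout", "useragent": GOOGLEBOT_UA},
    {"variant": "control", "event": "checkout", "useragent": HEADLESS_UA},
    {"variant": "variation", "event": "checkout", "useragent": HUMAN_UA},
    {"variant": "variation", "event": "checkout", "useragent": HUMAN_UA},
]


def is_bot(useragent):
    """Return True when the user agent matches the naive bot pattern."""
    return bool(BOT_PATTERN.search(useragent))


def checkouts_by_variant(events, exclude_bots):
    """Count checkout events per variant, optionally dropping bot traffic."""
    counts = Counter()
    for e in events:
        if e["event"] != "checkout":
            continue
        if exclude_bots and is_bot(e["useragent"]):
            continue
        counts[e["variant"]] += 1
    return dict(counts)


raw = checkouts_by_variant(events, exclude_bots=False)
filtered = checkouts_by_variant(events, exclude_bots=True)
print(raw, filtered)
```

In this toy data, the raw counts make the variation look like it halved checkouts, while the filtered counts are level. If all you have is the tracker's aggregated report, you can't rerun the numbers like this.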

Speed up your site

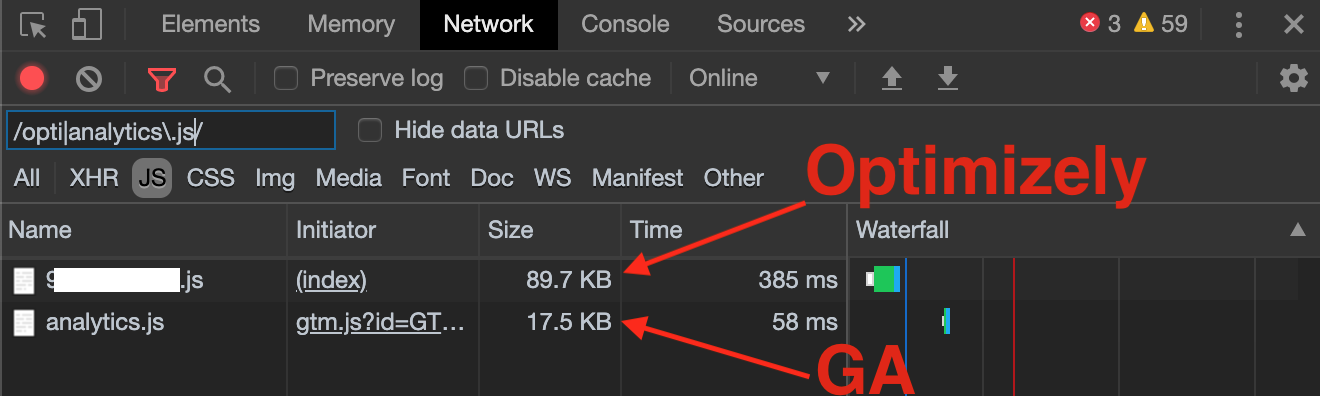

Google Analytics' tracker weighs in at ~17.5 KB (and that's quite lightweight for a tracker). Imagine loading that much extra data synchronously every time!

If you're using a SaaS testing tool (whether that's Optimizely, VWO or Convert.com), it comes with its own built-in tracker. You're carrying a bunch of dead weight on your pages.

3. Reduce your A/B testing container weight

When you strip out the tracking, your split testing container shrinks. Now, you'll track experiments using existing tags and tracking libraries rather than loading more in.

Despite most sites already including a GA tracker on the page, Optimizely injects its own tracker, and loads it synchronously, too.
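To show how little an experiment exposure needs once you reuse an existing tracker, here is a sketch of the data behind a single hit, written in the Universal Analytics Measurement Protocol format (the format behind analytics.js hits). The `build_experiment_hit` helper, the "experiment" event category and the example IDs are our assumptions for illustration. The block only builds the payload and sends nothing.

```python
from urllib.parse import urlencode, parse_qs


def build_experiment_hit(tracking_id, client_id, experiment, variant):
    """Build a URL-encoded event hit describing one experiment exposure."""
    params = {
        "v": "1",            # protocol version
        "tid": tracking_id,  # property ID
        "cid": client_id,    # anonymous client ID
        "t": "event",        # hit type
        "ec": "experiment",  # event category (naming is an assumption)
        "ea": experiment,    # event action: which experiment
        "el": variant,       # event label: which variant the user saw
        "ni": "1",           # non-interaction hit, so bounce rate is unaffected
    }
    return urlencode(params)


# Placeholder IDs; substitute your own property and experiment names.
payload = build_experiment_hit("UA-XXXXX-Y", "555", "homepage-hero", "variation-1")
print(payload)
```

That is a few dozen bytes riding on a library your pages already load, rather than a second tracker bundled into the container.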

4. Keep your Analytics libraries well-cached

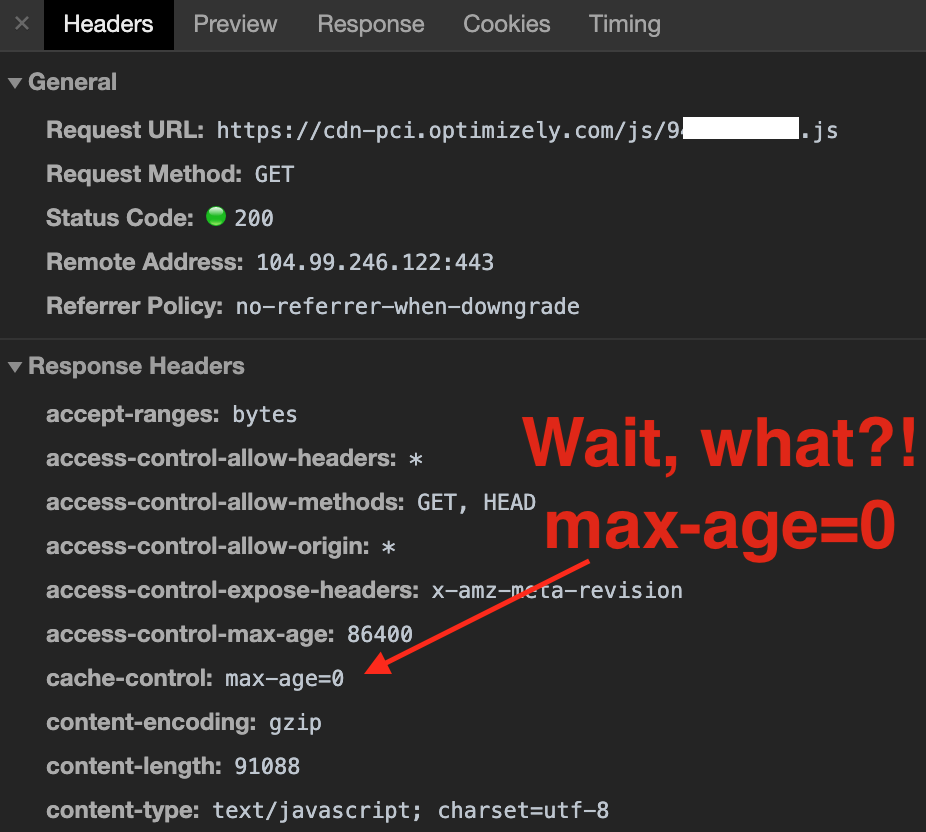

While your split testing container can't be cached for more than a few minutes (so you can launch tests quickly), your analytics trackers rarely change, meaning your users can cache analytics.js or sp.js for months or even years. This is great news for page load times.

It's typical for split testing containers to set cache-control: max-age to a low value - but you wouldn't expect your tracking library to change this often.

But A/B testing tools often bundle their tracking alongside experiments, adding to the cost of loading their containers.
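For a feel of the gap, the sketch below pulls `max-age` out of two Cache-Control headers and compares them. Both header values are hypothetical (a 5-minute container and a 1-year tracking library), so check what your own vendor and CDN actually send.

```python
import re


def max_age_seconds(cache_control):
    """Return the max-age value in seconds, or None if the header has none."""
    match = re.search(r"max-age=(\d+)", cache_control, re.IGNORECASE)
    return int(match.group(1)) if match else None


# Hypothetical response headers, for illustration only.
container_header = "public, max-age=300"     # split testing container: 5 minutes
tracker_header = "public, max-age=31536000"  # analytics library: 365 days

container_age = max_age_seconds(container_header)
tracker_age = max_age_seconds(tracker_header)

# How many container cache lifetimes fit into one tracker cache lifetime.
ratio = tracker_age // container_age
print(container_age, tracker_age, ratio)
```

With these assumed values, a returning visitor could need a fresh copy of the container every five minutes while reusing the same cached tracker for a year. Any tracking code bundled into the container gets re-downloaded on the container's schedule.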

Stay compliant & keep your data secure

5. Fewer tracking implementations to maintain

Without Optimizely, VWO et al. tracking your users, you'll no longer have to:

- Configure its goals & reports

- Protect users' PII from being collected

- Install extra ecommerce / event tracking

- Maintain IP filters

- Verify the data being collected (we all check that, right?)

Instead, your tracking maintenance, QA and dev can be focussed on your business' source of truth.

6. Ensure you're using data processors compliant with Australian Privacy Principles, GDPR & other policies

Is your testing tool compliant with GDPR, the APPs, etc.? Most likely, if you're using it correctly. But no SaaS tracker means no added compliance burden.

7. Minimise data leakage from your website

Split testing vendors are fortunately not in the business of selling your data, unlike tools like ShareThis. But having your data stored by yet another company in perhaps another jurisdiction is just one more thing you won't have to worry about.

We're big fans of being data-sovereign, so Snowplow Analytics is our choice of tool.